Data Science Online Training

Our Data Science Course in Chennai will help you work with large volumes of data using modern tools and techniques to find hidden patterns, derive meaningful information, and make effective business decisions.

Our Data Science Course in Chennai covers the components of the Data Science lifecycle, including Big Data and Hadoop, Machine Learning and Deep Learning, and R programming. The instructor will teach you how to combine mathematics, business acumen, tools, algorithms and machine learning techniques. You’ll also learn how to handle large amounts of data and apply it in line with the organization’s business strategy.

Syllabus of Data Science Course

Module 1: Introduction to Data Science with R

- What is Data Science, significance of Data Science in today’s digitally-driven world, applications of Data Science, lifecycle of Data Science, components of the Data Science lifecycle, introduction to Big Data and Hadoop, introduction to Machine Learning and Deep Learning, introduction to R programming and RStudio.

- Hands-on Exercise – Installation of RStudio, implementing simple mathematical operations and logic using R operators, loops, if statements and switch cases.

Module 2: Data Exploration

- Introduction to data exploration, what is exploratory data analysis, importing and exporting data to/from external sources, dataframes, working with dataframes, accessing individual elements, vectors and factors, operators, built-in functions, conditional and looping statements, user-defined functions, matrix, list and array.

- Hands-on Exercise – Accessing individual elements of customer churn data, modifying and extracting the results from the dataset using user-defined functions in R.

Module 3: Data Manipulation

- Need for Data Manipulation, Introduction to dplyr package, Selecting one or more columns with select() function, Filtering out records on the basis of a condition with filter() function, Adding new columns with the mutate() function, Sampling & Counting with sample_n(), sample_frac() & count() functions, Getting summarized results with the summarise() function, Combining different functions with the pipe operator, Implementing SQL-like operations with sqldf.

- Hands-on Exercise – Implementing dplyr to perform various operations for abstracting over how data is manipulated and stored.

Module 4: Data Visualization

- Introduction to visualization, different types of graphs, introduction to the grammar of graphics and the ggplot2 package, univariate analysis with bar plots, histograms and density plots, understanding categorical distributions with geom_bar(), understanding numerical distributions with geom_histogram(), building frequency polygons with geom_freqpoly(), making scatter plots with geom_point(), multivariate distribution with scatter plots and smooth lines, continuous vs categorical comparison with box plots using geom_boxplot(), subgrouping the plots, working with coordinates and adding themes with the theme() layer to make the graphs more presentable, introduction to plotly and its various plots, visualization with the ggvis package, geographic visualization with ggmap(), building web applications with Shiny.

- Hands-on Exercise – Creating data visualizations to understand the customer churn ratio with ggplot2 charts, and using plotly to import and analyze data in grids. You will visualize tenure, monthly charges, total charges and other individual columns using scatter plots.

Module 5: Introduction to Statistics

- Why do we need Statistics?, categories of Statistics, statistical terminologies, types of data, measures of central tendency, measures of spread, correlation & covariance, standardization & normalization, probability & types of probability, hypothesis testing, Chi-Square testing, ANOVA, normal distribution, binomial distribution.

- Hands-on Exercise – Building a statistical analysis model that uses quantifications, representations and experimental data for gathering, reviewing, analyzing and drawing conclusions from data.

Module 6: Machine Learning

- Introduction to Machine Learning, introduction to Linear Regression, predictive modeling with Linear Regression, simple and multiple Linear Regression, concepts and detailed formulas, assumptions of Linear Regression and residual diagnostics with qqnorm() and qqline(), understanding the fit of the model, building a simple linear model, predicting results and finding the p-value, understanding the summary results with the Null Hypothesis, p-value & F-statistic, building linear models with multiple independent variables, introduction to Logistic Regression, comparing Linear Regression and Logistic Regression, bivariate & multivariate Logistic Regression, confusion matrix & accuracy of the model, threshold evaluation with ROCR.

- Hands-on Exercise – Modeling the relationship within the data using linear predictor functions. Implementing Linear & Logistic Regression in R by building a model with ‘tenure’ as the dependent variable and multiple independent variables.

Module 7: Logistic Regression

- Introduction to Logistic Regression, Logistic Regression concepts, Linear vs Logistic Regression, math behind Logistic Regression, detailed formulas, logit function and odds, bivariate Logistic Regression, Poisson Regression, building a simple “binomial” model and predicting results, evaluating the built model with the confusion matrix, accuracy, true positive rate and false positive rate, threshold evaluation with ROCR, finding the right threshold by building the ROC plot, cross validation & multivariate Logistic Regression, building logistic models with multiple independent variables, real-life applications of Logistic Regression.

- Hands-on Exercise – Implementing predictive analytics by describing the data and explaining the relationship between one binary dependent variable and one or more independent variables. You will use glm() to build a model with ‘Churn’ as the dependent variable.
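
The logit function and odds listed in this module can be illustrated with a few lines of code. The course itself works in R; the sketch below uses plain Python only for illustration, and the function names (`odds`, `logit`, `sigmoid`) are hypothetical helpers, not part of any course material.

```python
import math

def odds(p):
    """Odds of an event with probability p (0 < p < 1)."""
    return p / (1.0 - p)

def logit(p):
    """Log-odds: the quantity logistic regression models as a linear function."""
    return math.log(odds(p))

def sigmoid(z):
    """Inverse of the logit: maps a log-odds value back to a probability."""
    return 1.0 / (1.0 + math.exp(-z))

# Illustrative value only: a churn probability of 0.8 corresponds to odds of 4 to 1.
p = 0.8
print(odds(p), logit(p), sigmoid(logit(p)))
```

Applying `sigmoid` to the output of `logit` recovers the original probability, which is why a linear model on the log-odds scale can still produce predictions between 0 and 1.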

Module 8: Decision Trees & Random Forest

- What is classification and different classification techniques, introduction to Decision Tree, algorithm for decision tree induction, building a decision tree in R, creating a perfect Decision Tree, Confusion Matrix, Regression trees vs Classification trees, impurity functions (entropy, information gain and Gini index) and how each is used to find the right split of a node, overfitting & pruning, pre-pruning, post-pruning, cost-complexity pruning, pruning a decision tree and predicting values, introduction to ensembles of trees and bagging, Random Forest concept, implementing Random Forest in R, finding the right number of trees and evaluating performance metrics, what is Naive Bayes, computing probabilities.

- Hands-on Exercise – Implementing Random Forest for both regression and classification problems. You will build a tree and prune it using ‘churn’ as the dependent variable, then build a Random Forest with the right number of trees, using ROCR for performance metrics.
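
The impurity functions named in this module (entropy, Gini index, information gain) are short enough to compute by hand. The following is a minimal Python sketch for illustration, since the course implements trees in R; the helper names and the toy labels are assumptions made for this example.

```python
import math
from collections import Counter

def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())

def gini(labels):
    """Gini index: probability of mislabeling a randomly drawn item."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())

def information_gain(parent, children):
    """Entropy of the parent node minus the size-weighted entropy of its children."""
    n = len(parent)
    weighted = sum(len(child) / n * entropy(child) for child in children)
    return entropy(parent) - weighted

# Toy node with two churners and two non-churners, split perfectly in two.
parent = ["yes", "yes", "no", "no"]
split = [["yes", "yes"], ["no", "no"]]
print(entropy(parent), gini(parent), information_gain(parent, split))
```

A split that separates the classes completely yields the largest possible information gain for that node, which is the criterion a tree-induction algorithm uses to pick the right split.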

Module 9: Unsupervised learning

- Introduction to Unsupervised Learning, what is Clustering & its use cases, feature extraction & clustering algorithms, what is K-means Clustering, theoretical aspects of K-means and the K-means process flow, implementing K-means in R on the dataset and finding the right number of clusters using a scree plot, what is Canopy Clustering, what is Hierarchical Clustering, implementing Hierarchical Clustering in R and reading dendrograms, Principal Component Analysis (PCA) explained in detail, implementing PCA in R.

- Hands-on Exercise – Deploying unsupervised learning with R to achieve clustering and dimensionality reduction, and using K-means clustering to visualize and interpret results for the customer churn data.

Module 10: Association Rule Mining & Recommendation Engine

- Introduction to Association Rule Mining & Market Basket Analysis, measures of Association Rule Mining: Support, Confidence, Lift, Apriori algorithm & implementing it in R, introduction to Recommendation Engine, User-Based Collaborative Filtering & Item-Based Collaborative Filtering, implementing user-based and item-based Recommendation Engines in R, Recommendation use cases.

- Hands-on Exercise – Deploying association analysis as a rule-based machine learning method, identifying strong rules discovered in databases using measures of interestingness.
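
Support, confidence and lift, the three measures listed in this module, can be computed directly from a list of transactions. The sketch below is a plain-Python illustration (the course uses R); the transaction data and function names are made up for this example.

```python
# Hypothetical shopping baskets, one set of items per transaction.
transactions = [
    {"bread", "milk"},
    {"bread", "butter"},
    {"bread", "milk", "butter"},
    {"milk"},
]

def support(itemset, transactions):
    """Fraction of transactions that contain every item in itemset."""
    itemset = set(itemset)
    return sum(itemset <= t for t in transactions) / len(transactions)

def confidence(lhs, rhs, transactions):
    """Estimated P(rhs | lhs): support of the combined itemset over support of lhs."""
    return support(set(lhs) | set(rhs), transactions) / support(lhs, transactions)

def lift(lhs, rhs, transactions):
    """Confidence of lhs -> rhs relative to the baseline support of rhs."""
    return confidence(lhs, rhs, transactions) / support(rhs, transactions)

print(support({"bread", "milk"}, transactions))
print(confidence({"bread"}, {"milk"}, transactions))
print(lift({"bread"}, {"milk"}, transactions))
```

A lift above 1 suggests the two itemsets occur together more often than they would if independent, while a lift below 1 suggests the opposite.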

Module 11: Introduction to Artificial Intelligence (self paced)

- Introducing Artificial Intelligence and Deep Learning, what is an Artificial Neural Network (ANN), TensorFlow – computational framework for building AI models, fundamentals of building an ANN using TensorFlow, working with TensorFlow in R.

Module 12: Time Series Analysis (self paced)

- What is Time Series, techniques and applications, components of Time Series, moving average, smoothing techniques, exponential smoothing, univariate time series models, multivariate time series analysis, ARIMA model, Time Series in R, sentiment analysis in R (Twitter sentiment analysis), text analysis.

- Hands-on Exercise – Analyzing time series data, a sequence of measurements that follows a non-random order, to identify the nature of the phenomenon and to forecast future values in the series.
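
Two of the smoothing techniques named in this module, the moving average and simple exponential smoothing, can be sketched as follows. This is an illustrative Python version under simple assumptions (a trailing window, and the first observation used as the initial smoothed value); the course covers these topics in R.

```python
def moving_average(series, window):
    """Trailing moving average; the result has len(series) - window + 1 values."""
    return [
        sum(series[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(series))
    ]

def exponential_smoothing(series, alpha):
    """Simple exponential smoothing with smoothing factor alpha in (0, 1]."""
    smoothed = [series[0]]  # assumption: seed with the first observation
    for x in series[1:]:
        smoothed.append(alpha * x + (1 - alpha) * smoothed[-1])
    return smoothed

# Illustrative series only.
print(moving_average([1, 2, 3, 4, 5], window=3))
print(exponential_smoothing([10, 20, 30], alpha=0.5))
```

A larger `alpha` makes the smoothed series react faster to recent observations, while a smaller one gives more weight to history.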

Module 13: Support Vector Machine – (SVM) (self paced)

- Introduction to Support Vector Machine (SVM), data classification using SVM, SVM algorithms for separable and inseparable cases, Linear SVM for identifying the margin hyperplane.

Module 14: Naïve Bayes (self paced)

- What is Bayes’ theorem, what is the Naïve Bayes Classifier, classification workflow, how the Naïve Bayes Classifier works, classifier building in Scikit-learn, building a probabilistic classification model using Naïve Bayes, Zero Probability Problem.

Module 15: Text Mining (self paced)

- Introduction to concepts of Text Mining, Text Mining use cases, understanding and manipulating text with ‘tm’ & ‘stringr’, Text Mining Algorithms, Quantification of Text, Term Frequency-Inverse Document Frequency (TF-IDF), After TF-IDF.
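
TF-IDF, listed above, weights a term by how often it appears in a document and how rare it is across documents. The sketch below is one common unsmoothed variant written in plain Python for illustration; the course works with the R packages named above, and the function name, tokenization by whitespace, and sample documents are all assumptions of this example.

```python
import math
from collections import Counter

def tf_idf(docs):
    """Return one {term: score} dict per document.

    tf  = term count / number of tokens in the document
    idf = ln(number of documents / number of documents containing the term)
    """
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    # Document frequency: in how many documents each term appears.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        scores.append({
            term: (count / len(tokens)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return scores

# Hypothetical mini-corpus.
docs = ["data science with r", "text mining with r", "time series in r"]
scores = tf_idf(docs)
print(scores[0])
```

In this variant a term that occurs in every document gets a score of zero, because its inverse document frequency is ln(1) = 0.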

Module 16: Case Study

- This case study applies the Market Basket Analysis modeling technique. You will learn about loading data, various techniques for plotting the items, and running the algorithms. It includes finding out which items go hand in hand and can therefore be grouped together. This is used in various real-world scenarios such as a supermarket shopping cart.

- 5 Sections

- 35 Hours

- What kind of employment options can I look forward to once I've finished the Needintech data science course?

  With specialisations in Python, Kaggle, SQL, and Tableau, our Certification Program will help you obtain roles such as Data Scientist, Data Analyst, Associate Analyst Manager, and Manager. Because this sector is so broad, our training will also enable you to transition seamlessly into any other role of your choosing.

- Why enrol in a Needintech Data Science Course in Chennai?

  In today’s economy, data science and analytics roles are among the most sought-after career positions. There are more than 30,000 job vacancies for diverse positions in the field, and skills are what employers are actually looking for. Because this subject is so young, it’s crucial that you enrol in a Data Science course that is both approachable for beginners and applicable to the workplace. The Data Science and Analytics certification programme offered by upGrad Campus fills this need. This course, which was created especially for college students and newcomers, will have you building predictive models in no time. Learn from scratch and gain practical experience with Python, Tableau, SQL, Excel, and other Data Science ideas and tools.

- Are Needintech's Data Science Trainers well-equipped?

  Needintech Training Institute’s Data Science Mentors are Certified Experts with 10+ years of experience in Data Science programming. Needintech’s Data Science Training Faculty consists of real-world Data Science Developers/Programmers who provide students with extensive hands-on experience.

- What software/tools will be required for the training, and where can I obtain them?

  All software/tools required for the training will be provided to you as needed during the training.

- What requirements must one meet in order to enrol in a data science course?

  The first prerequisite for enrolling in our Data Science and Analytics course is a passion for data and problem-solving. The entire course is geared towards novices and starts with the very fundamentals, including Python programming. Coding experience is therefore not required, although familiarity with a programming language such as Java, Python, or C/C++ is a plus.

Related Blogs

How to Build a Full Stack Developer Portfolio from Scratch in 2026 That Actually Gets You Hired

Quick Answer Learning how to build a full stack...

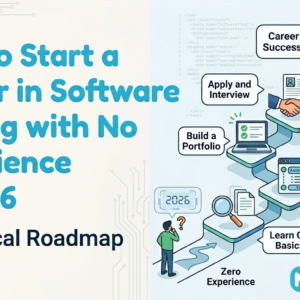

How to Start a Career in Software Testing with No Experience in 2026: A Practical Roadmap

Quick Answer: Absolutely. If you are wondering how to...

Artificial Intelligence Online Training by NeedinTech

Artificial Intelligence Online Training by NeedinTech: The Best Training...

Top Reasons to Study Data Science Course In Needintech

Introduction : Data Science is an interdisciplinary field that...